The corresponding Avro schemas are registered with the Confluent Schema Registry instance because that’s how one creates production-ready data streams.

The application’s input data is in Avro format and comes from two sources: a stream of play events (think: “song X was just played”) and a stream of song metadata (“song X was written by artist Y”). It exposes its latest Streams processing results-the latest music charts-through Kafka’s Interactive Queries feature (see our documentation on Interactive Queries ) combined with a REST API.

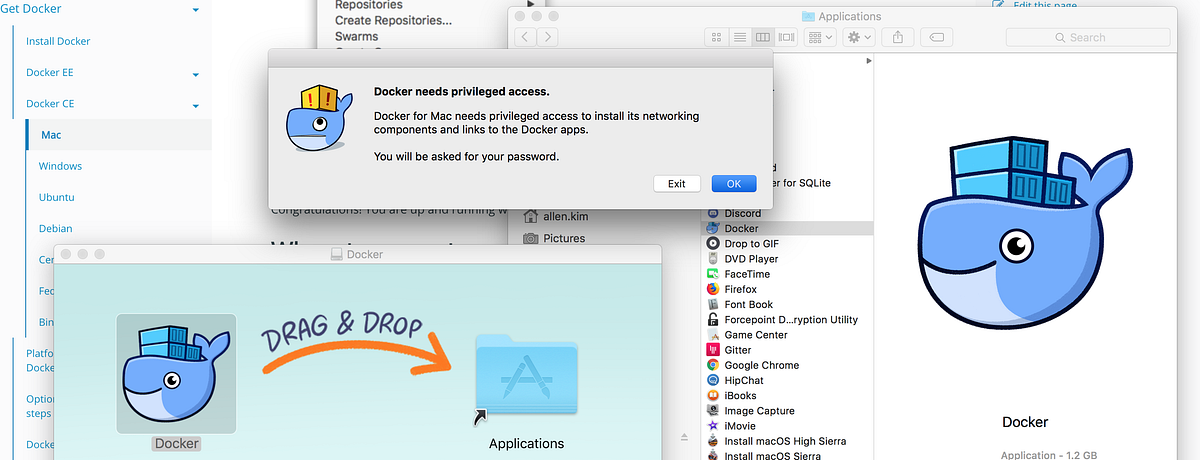

Mac os docker getting started how to#

Our Kafka Music application demonstrates how to build a music charts application that continuously computes, in real-time, the latest charts such as “Top 5 songs” per music genre.

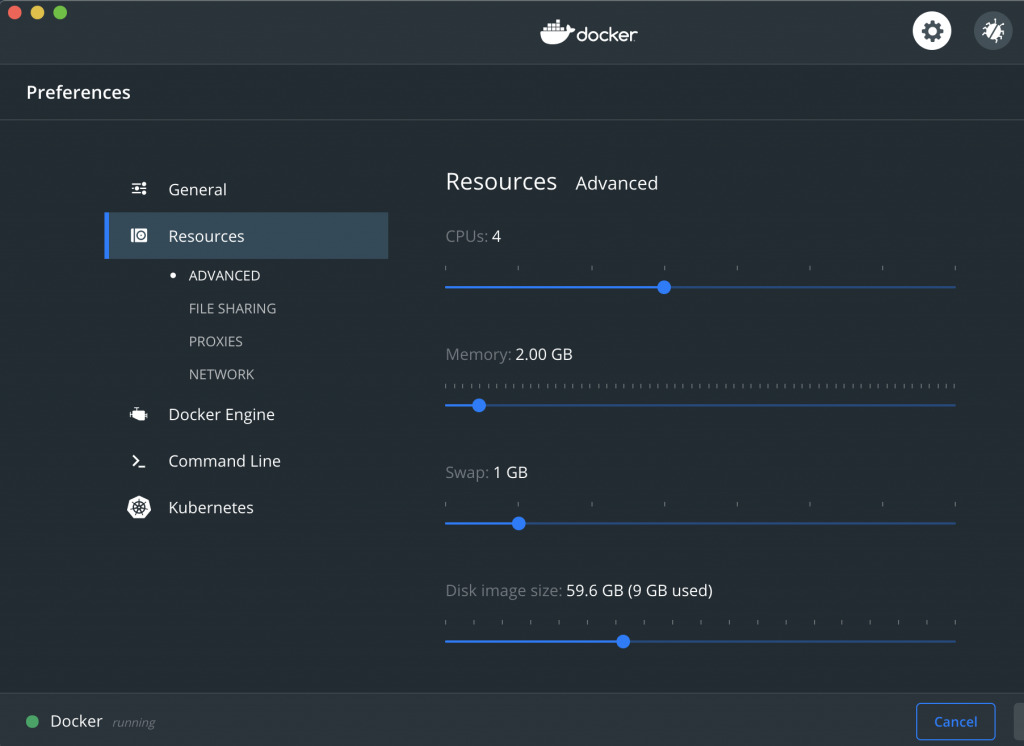

Afterward, this will take just a few seconds! If you are reading this blog post for the first time, this will take you about five minutes. We will run the Confluent Kafka Music demo application in a containerized, multi-service deployment, using Docker. This Docker-based demo is the focus of this blog post and, because the demo is a one-click experience, the remainder of this post will be quite short and concise! Creating the Kafka Music Demo In order to make your getting started experience even better, we recently added a new Docker-based demo setup. To get started with the Kafka Streams API, most users typically begin with our Confluent demo applications or the Kafka Streams API chapter in the Confluent documentation. And-speaking of containers and Docker-this brings us to the focus of this blog. If you do like containers and enjoy deploying to Kubernetes or a cloud service like AWS EC2, no problem either. For example, if you don’t like containers but prefer deploying to VMs with Puppet or Ansible, no problem. (And as a side note, these applications are backwards and forwards compatible with Kafka cluster versions, making such deployment super-flexible to accommodate for independently working teams across a company.) This also means you are able to use the same organizational processes and technical tooling for development, testing, packaging, deployment, and monitoring of the Kafka Streams applications just like you do everywhere else inside your company.

Mac os docker getting started install#

And yes, unlike related technologies such as Apache® Spark™ or Apache Flink®, where you must install and run special processing clusters into which you then submit cluster-specific “processing jobs,” you actually can containerize applications that use the Kafka Streams API because these are standard Java applications. Īdditionally, Docker is also a very popular choice among Kafka users for containerizing and deploying applications and microservices on platforms such as Kubernetes or in the cloud. Many developers love container technologies such as Docker and the Confluent Docker images to speed up the iterative development they’re doing on their laptops: for example, to quickly spin up a containerized Confluent Platform deployment consisting of multiple services such as Apache Kafka, Confluent Schema Registry, and Confluent REST Proxy for Kafka. Docker and Kafka Streams API: A Perfect Match Our own GitHub repo containing the Confluent demo applications uses exactly such a setup. And they also use the same setup for automated integration testing in CI environments backed by Jenkins or Travis CI. Some users, for example, opt to test-drive and develop their applications on their laptops against embedded, in-memory instances of Kafka and related services such as Confluent Schema Registry.

In fact, it’s pretty common for our users to have their first application or proof-of-concept running in a matter of minutes. Unlike competing technologies, Apache Kafka ® and its Streams API does not require installing a separate processing cluster, and it is equally viable for small, medium, large, and very large use cases. What’s great about the Kafka Streams API is not just how fast your application can process data with it, but also how fast you can get up and running with your application in the first place-regardless of whether you are implementing your applications in Java or other JVM-based languages such as Scala and Clojure.
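To make the charts idea behind the Kafka Music application a bit more concrete, here is a minimal sketch in plain Java of the kind of computation involved: count plays per song, look up each song's metadata, and keep the top songs per genre. It deliberately does not use the Kafka Streams API and is not the demo's actual code. The class `MusicChartsSketch`, the `Song` type, the method `topSongsPerGenre`, and the sample data are all hypothetical names invented for this illustration, and the sketch works on a finite in-memory list rather than on continuously arriving streams.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Comparator;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;

// Plain-Java illustration of the charts logic; this is NOT the Kafka Streams API.
class MusicChartsSketch {

    // Hypothetical stand-in for one record of the song metadata stream.
    static final class Song {
        final long id;
        final String name;
        final String genre;

        Song(long id, String name, String genre) {
            this.id = id;
            this.name = name;
            this.genre = genre;
        }
    }

    // Returns, per genre, the names of the most-played songs (at most `limit`),
    // ordered by play count descending; ties are broken by song name.
    static Map<String, List<String>> topSongsPerGenre(
            List<Long> playedSongIds, Map<Long, Song> songsById, int limit) {

        // Step 1: count plays per song ID (each list element is one play event).
        Map<Long, Long> playCounts = new HashMap<>();
        for (long songId : playedSongIds) {
            playCounts.merge(songId, 1L, Long::sum);
        }

        // Step 2: look up each played song's metadata and group songs by genre.
        Map<String, List<Song>> songsByGenre = new TreeMap<>();
        for (Long songId : playCounts.keySet()) {
            Song song = songsById.get(songId);
            if (song == null) {
                continue; // no metadata known for this song; skip it
            }
            songsByGenre.computeIfAbsent(song.genre, g -> new ArrayList<>()).add(song);
        }

        // Step 3: rank the songs within each genre and keep the top `limit`.
        Map<String, List<String>> charts = new TreeMap<>();
        for (Map.Entry<String, List<Song>> entry : songsByGenre.entrySet()) {
            List<Song> ranked = entry.getValue();
            ranked.sort(Comparator
                    .comparingLong((Song s) -> -playCounts.get(s.id))
                    .thenComparing(s -> s.name));
            List<String> top = new ArrayList<>();
            for (Song s : ranked.subList(0, Math.min(limit, ranked.size()))) {
                top.add(s.name);
            }
            charts.put(entry.getKey(), top);
        }
        return charts;
    }

    public static void main(String[] args) {
        // Made-up sample data for illustration only.
        Map<Long, Song> songs = new HashMap<>();
        songs.put(1L, new Song(1L, "Song A", "rock"));
        songs.put(2L, new Song(2L, "Song B", "rock"));
        songs.put(3L, new Song(3L, "Song C", "jazz"));

        List<Long> plays = Arrays.asList(1L, 2L, 2L, 3L, 2L, 1L, 3L);

        // Prints: {jazz=[Song C], rock=[Song B, Song A]}
        System.out.println(topSongsPerGenre(plays, songs, 5));
    }
}
```

In a streaming setting the same three steps would run continuously as new play events arrive, and the resulting per-genre charts are the kind of state that an application could then expose to callers.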